Here is an update on the El Reno Survey project. We have had a modest but highly supportive response so far to our initial solicitation. Twenty individual chasers or chase groups have now submitted survey forms, and several others have promised to send theirs in soon. Notably, all respondents have offered to make their video and/or still imagery available to the project database. Below is an overview of how we take storm imagery, whose time and location are often unknown, and place it in a framework suitable for research applications.

Time calibration is performed principally by matching lightning flashes recorded on video with cloud-to-ground (CG) lightning flashes recorded by the National Lightning Detection Network (NLDN; http://gcmd.nasa.gov/records/GCMD_NLDN.html). Since our primary research interest is the mesocyclone region of the supercell, we use as our reference database all CG flashes recorded within a 25-km (~15 mile) radius of the mesocyclone center, as determined by radar. The storm was a prolific lightning producer at times, giving many opportunities for matching CG events captured on video to the NLDN dataset. In this way we can determine the actual time of most video clips, provided that they are long enough to include several flashes. Then, with unedited video footage provided to us by our survey respondents, we can calculate the offset between the video camera clock and actual time from the time-calibrated segments, and thus fix the times of all other clips from that camera as well. The result is video footage timed to within 1 second of actual time.
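To make the flash-matching idea concrete, here is a minimal sketch in Python. It is an illustration only, not the project's actual tooling: the function name `estimate_clock_offset`, the 0.5-second matching tolerance, and the sample times are all assumptions. It tries every pairing of a video flash with an NLDN flash as a candidate clock offset and keeps the offset under which the most video flashes line up with an NLDN flash.

```python
from bisect import bisect_left
from statistics import median


def estimate_clock_offset(video_flashes, nldn_flashes, tolerance=0.5):
    """Estimate offset so that camera_time + offset is close to true time.

    video_flashes: flash times read from the camera clock (seconds).
    nldn_flashes:  CG flash times from the reference network (seconds).
    tolerance:     assumed maximum mismatch (seconds) to count as a match.
    Returns (offset, number_of_matched_flashes); offset is None if no match.
    """
    nldn = sorted(nldn_flashes)

    def nearest_residual(t):
        # Signed difference between t and the closest NLDN flash time.
        i = bisect_left(nldn, t)
        candidates = nldn[max(i - 1, 0):i + 1]
        return min((c - t for c in candidates), key=abs)

    best_offset, best_count = None, 0
    for v in video_flashes:
        for n in nldn:
            offset = n - v  # candidate: this video flash IS this NLDN flash
            residuals = [nearest_residual(u + offset) for u in video_flashes]
            matched = [r for r in residuals if abs(r) <= tolerance]
            if len(matched) > best_count:
                best_count = len(matched)
                # Nudge the candidate by the median residual of the matches.
                best_offset = offset + median(matched)
    return best_offset, best_count


# Made-up example: camera clock runs 125 s behind true time, and the
# reference list also contains flashes the camera never recorded.
video = [10.0, 14.2, 21.7, 30.1]
nldn = [50.0, 135.0, 139.2, 140.5, 146.7, 155.1, 160.0]
offset, matched = estimate_clock_offset(video, nldn)
```

Once an offset has been found for one calibrated segment, the same value can be added to the camera timestamps of the other clips from that camera, as long as the camera clock was not reset in between.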

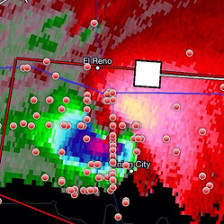

Establishing the locations where stills and video were obtained is generally possible for much, though not all, imagery. Some chasers can provide us with GPS logs, which makes the task relatively easy. Those without such precise position records are usually able to reconstruct their driving routes, which helps considerably. Our principal tools for fixing locations and estimating the direction of a camera’s field of view are the freely available Google Earth and Google Maps. Satellite views allow us to identify structures and landscape features recorded on the video, with the road network and viewing angle helping to define where the camera must have been located as the imagery was being captured. For video taken from moving vehicles, it is usually possible to count the number of power poles passed after major intersections, or even the dashed centerline markings on paved roads. The accuracy of these methods varies, but we can generally constrain estimated locations to within 100 meters (~330 ft) of the actual ground point, and often to 10 meters or less.
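The pole-counting idea can be sketched as a simple dead-reckoning calculation. The Python below is a hypothetical illustration, not the project's procedure: the function name `position_along_road`, the spherical-Earth radius, and the example coordinates are assumptions, and the pole spacing is not a fixed standard, so it would need to be measured for the road in question (for example from satellite imagery).

```python
import math

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius; spherical approximation


def position_along_road(lat_deg, lon_deg, bearing_deg,
                        poles_passed, pole_spacing_m):
    """Estimate a position from a known intersection and a pole count.

    lat_deg, lon_deg: location of the reference intersection (degrees).
    bearing_deg:      direction of travel, clockwise from true north.
    poles_passed:     number of power poles counted since the intersection.
    pole_spacing_m:   assumed spacing between poles, measured separately.
    Returns (latitude, longitude) in degrees.
    """
    distance_m = poles_passed * pole_spacing_m
    delta = distance_m / EARTH_RADIUS_M  # angular distance in radians
    lat1 = math.radians(lat_deg)
    lon1 = math.radians(lon_deg)
    theta = math.radians(bearing_deg)

    # Standard great-circle "destination point" formula.
    lat2 = math.asin(math.sin(lat1) * math.cos(delta)
                     + math.cos(lat1) * math.sin(delta) * math.cos(theta))
    lon2 = lon1 + math.atan2(
        math.sin(theta) * math.sin(delta) * math.cos(lat1),
        math.cos(delta) - math.sin(lat1) * math.sin(lat2),
    )
    return math.degrees(lat2), math.degrees(lon2)


# Made-up example: 10 poles at an assumed 50 m spacing, heading due north
# from a hypothetical intersection at 35.5 N, 98.0 W.
lat, lon = position_along_road(35.5, -98.0, 0.0, 10, 50.0)
```

The uncertainty of such an estimate depends on how well the spacing and the starting intersection are known, so it is best treated as a first guess to be checked against landmarks visible in the imagery.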

We will provide further updates and additional insights into our methods and results as the project progresses. In the meantime, we express thanks to all who have responded to our survey thus far, and encourage all other chasers who witnessed this momentous storm to please participate as well.

As a reminder, the survey form can be viewed by clicking this link. The form is in MS-Word format and can be downloaded from the “File” menu on the toolbar.

John Allen, for the project team